I've managed SEO campaigns for over fifteen years. Built link profiles, optimized title tags, chased keyword rankings, run technical audits, cleaned up crawl errors. I know traditional SEO. I know what works.

And I'm telling you: almost none of it matters for getting cited by AI.

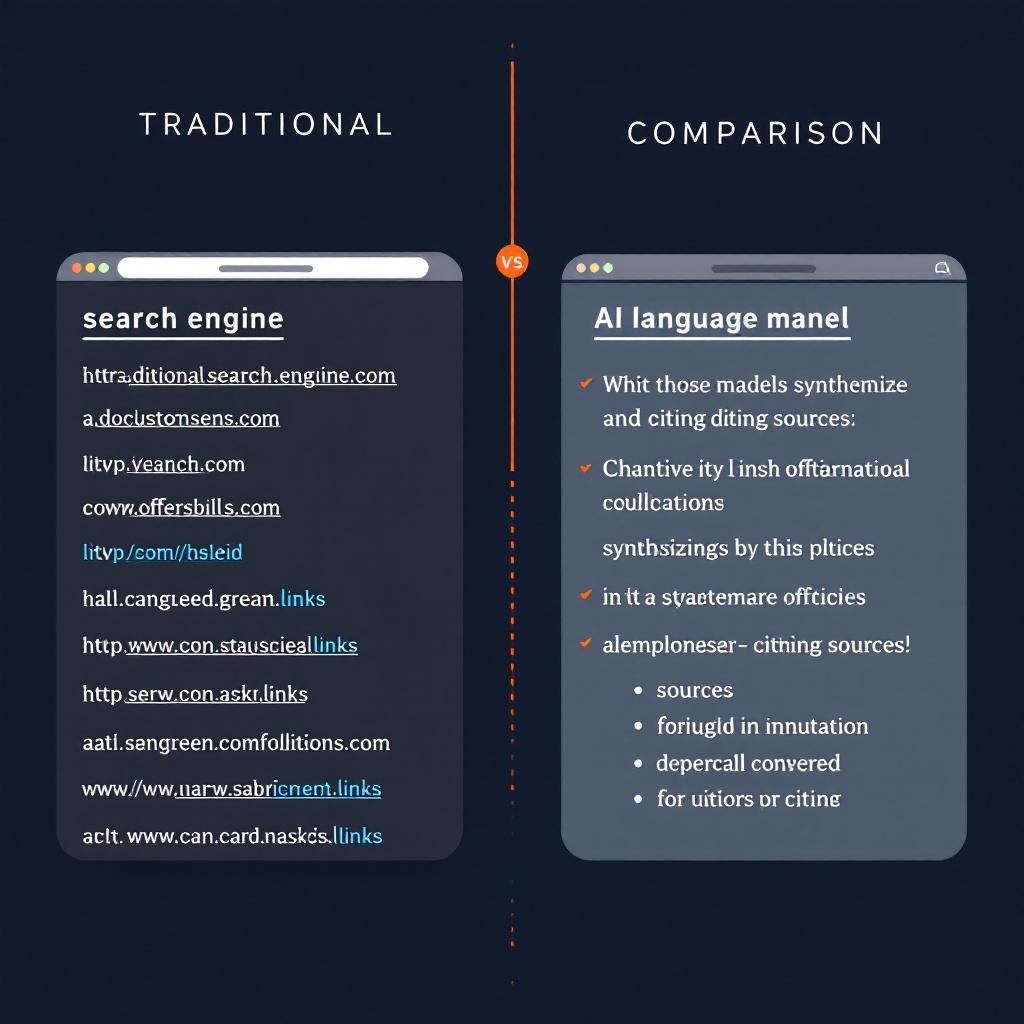

LLM SEO — optimizing content to be cited by large language models like ChatGPT, Claude, and Perplexity — is a fundamentally different discipline from traditional search engine optimization. The ranking signals are different. The evaluation criteria are different. The content that wins is different. If you're applying your Google SEO playbook to AI search, you're optimizing for a system that doesn't exist.

This article breaks down exactly what traditional SEO gets wrong about AI citation, and what LLM SEO actually requires.

What LLMs Do Differently

Before we get into tactics, we need to understand the mechanism. A traditional search engine like Google does this:

- Crawls and indexes web pages

- Evaluates pages against a commonly cited figure of roughly 200 ranking signals (backlinks, keyword relevance, page speed, etc.)

- Returns a ranked list of pages

A large language model does this:

- Processes a natural language query to understand intent

- Either retrieves relevant sources in real-time (ChatGPT browsing, Perplexity) or draws from training data

- Synthesizes an answer by combining information from multiple sources

- Cites the sources it drew from — if it cites at all

The critical difference: LLMs don't rank pages. They synthesize answers. Your content doesn't need to beat competitors for a position. It needs to be authoritative and clear enough that the model extracts information from it and attributes it to you.

This changes everything about how you create content.

What Traditional SEO Gets Wrong

Keyword Density Is Irrelevant

Traditional SEO trains you to include target keywords at specific frequencies. Write "best running shoes" in the title, the first paragraph, three H2s, and the meta description. Aim for 1-2% keyword density.
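
For reference, the keyword-density metric this advice targets is simple arithmetic. The sketch below assumes one common definition (exact-phrase occurrences divided by total word count); SEO tools differ on the details, and the `keyword_density` helper and the sample text are hypothetical.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Return exact-phrase occurrences as a percentage of total words.

    Assumed definition: occurrences / total words * 100. Some tools
    instead weight each occurrence by the number of words in the phrase.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = re.findall(r"[a-z0-9']+", phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide a window over the word list and count exact phrase matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return 100.0 * hits / len(words)


# Hypothetical sample copy: 20 words, 2 occurrences of the phrase.
sample = (
    "The best running shoes depend on your gait. "
    "We tested twelve pairs to find the best running shoes for beginners."
)
print(f"{keyword_density(sample, 'best running shoes'):.1f}%")
```

A page can score 0% on this metric for a query and still answer it well, which is why the metric says little about citation.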

LLMs don't evaluate keyword density. They understand semantic meaning. A page about running shoe selection that never uses the exact phrase "best running shoes" can still be cited for that query — if it provides the most authoritative, clear answer.

What matters instead: semantic coverage. Does your content thoroughly address the topic? Does it cover related concepts, use cases, and edge cases? LLMs evaluate comprehensiveness, not keyword frequency.

Backlinks Don't Drive LLM Citations

In Google's algorithm, backlinks are votes of confidence. More links from authoritative domains equals higher rankings. Entire industries exist around link building.

LLMs don't count your backlinks or evaluate your domain authority score directly. A page with zero backlinks but genuinely original research can get cited over a page with 10,000 backlinks that contains the same generic information as everyone else.

What matters instead: source authority signals. Named author with verifiable credentials. First-party data and original research. Content from a domain associated with practitioner expertise. These are the trust signals LLMs evaluate — not PageRank.

Meta Tags Are Not Citation Triggers

Title tags, meta descriptions, header tags — the bread and butter of on-page SEO — don't function as citation triggers for LLMs. A perfectly optimized title tag won't make ChatGPT cite your page.

What matters instead: content structure and clarity. AI models parse the actual content, not the metadata surrounding it. Clear headings that describe section content accurately (not keyword-stuffed), well-organized information hierarchy, and logical flow — these are what help an LLM find and cite relevant information in your content.

Page Speed and Core Web Vitals Are Beside the Point

Google's ranking algorithm factors in loading speed, layout shift, and interactivity. You can invest heavily in shaving milliseconds off your largest contentful paint.

LLMs don't render your page. They read the text. A slow-loading page with brilliant content will be cited. A lightning-fast page with thin content won't.

This doesn't mean you should ignore performance — it still matters for user experience and Google rankings. But it has zero impact on LLM citation.

Exact-Match Anchor Text Is Meaningless

Traditional link building strategies emphasize anchor text relevance. Getting a backlink with the anchor text "AI marketing agency" helps you rank for that term in Google.

LLMs don't evaluate inbound anchor text distribution. They don't even see your backlink profile. The concept of anchor text optimization has no equivalent in LLM SEO.

What Actually Drives LLM Citations

If the traditional playbook doesn't work, what does? Based on a year of building and operating our AI SEO intelligence system, these are the factors that consistently predict whether content gets cited by AI.

Factual Authority

Factual authority means your content contains verifiable, specific, accurate information that an LLM can extract as a statement of fact. This is the single most important factor in LLM citation.

Compare these:

Low factual authority:

"Marketing agencies that use AI tools can see significant improvements in campaign performance and client satisfaction."

High factual authority:

"We replaced approximately $2,000/month in SaaS subscriptions with custom AI tools that cost $5-10 per full client campaign to operate. The savings fund either lower management fees or higher ad budgets for clients."

The second version has specific numbers, a concrete mechanism (what was replaced, what it costs now), and a verifiable outcome. An LLM can extract this as a citable data point. The first version is generic filler that no AI would bother citing.
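
One way to see why the second version is extractable: its numbers support a calculation. The sketch below works out the monthly campaign volume at which per-campaign AI costs would equal the replaced subscriptions. It assumes costs scale linearly with campaign count and ignores build and maintenance time, neither of which the quote specifies. The function names and the 20-campaign volume are hypothetical.

```python
SAAS_MONTHLY = 2000.0            # replaced SaaS spend per month (from the quote)
COST_PER_CAMPAIGN = (5.0, 10.0)  # low and high cost per campaign (from the quote)


def break_even_campaigns(saas_monthly: float, cost_per_campaign: float) -> float:
    """Campaigns per month at which tool costs equal the old SaaS bill."""
    return saas_monthly / cost_per_campaign


def monthly_savings(saas_monthly: float, cost_per_campaign: float, campaigns: int) -> float:
    """Savings for a given campaign count, assuming linear per-campaign cost."""
    return saas_monthly - cost_per_campaign * campaigns


CAMPAIGNS = 20  # hypothetical monthly volume, not a figure from the article
for cost in COST_PER_CAMPAIGN:
    print(
        f"${cost:.0f}/campaign: break-even at "
        f"{break_even_campaigns(SAAS_MONTHLY, cost):.0f} campaigns, "
        f"saves ${monthly_savings(SAAS_MONTHLY, cost, CAMPAIGNS):.0f}/month at {CAMPAIGNS}"
    )
```

The generic sentence in the first version offers nothing comparable to compute or check.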

Structured Knowledge

Structured knowledge is information organized in a way that LLMs can parse, extract, and recombine without losing meaning. This includes:

- Definitions — Clear, standalone statements that define a concept. "True ROAS is calculated by dividing verified first-party revenue by total ad spend."

- Taxonomies — Categorized information with explicit relationships. "AI search engines include retrieval-based systems (Perplexity, ChatGPT with browsing), training-based systems (Claude, base ChatGPT), and hybrid systems (Google AI Overviews)."

- Comparisons — Structured side-by-side evaluations with consistent criteria applied to each option.

- Procedures — Step-by-step processes with numbered, sequential instructions.

Notice the pattern: all of these are formats where information can be extracted without context. An LLM can pull a definition, a comparison table, or a procedure step and present it in its response without needing to summarize or reinterpret. That's what makes content citable.
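
The True ROAS definition quoted in the list above is a good test of this: it is precise enough to translate directly into code. A minimal sketch, where the function name and the sample figures are hypothetical:

```python
def true_roas(verified_first_party_revenue: float, total_ad_spend: float) -> float:
    """True ROAS = verified first-party revenue / total ad spend."""
    if total_ad_spend <= 0:
        raise ValueError("total_ad_spend must be positive")
    return verified_first_party_revenue / total_ad_spend


# Hypothetical figures: $12,000 verified revenue on $3,000 ad spend.
print(true_roas(12_000.0, 3_000.0))  # 4.0
```

A definition that cannot be restated this mechanically usually depends on surrounding context, which makes it harder to extract.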

Unique Data and Original Research

LLMs are trained on (or retrieve) massive amounts of content. Most of that content says the same thing. The articles that get cited are the ones that contain information the model can't find elsewhere.

This is where practitioners have an enormous advantage over content marketers. If you actually do the work — run campaigns, build tools, analyze data, serve clients — you have first-party data that nobody else has.

Some examples from our own content:

- Specific cost figures for building custom AI tools vs. SaaS subscriptions

- Performance benchmarks from campaigns we actually manage

- Architecture details of tools we built and operate

- Workflow comparisons with specific time savings measured

Content farms can't generate this. SEO agencies writing generic "ultimate guides" can't compete with it. Practitioners documenting real work produce the exact kind of unique data that LLMs prioritize.

Practitioner Credibility

Practitioner credibility is the combination of demonstrated expertise, consistent publishing history, and verifiable real-world experience that signals to AI models that a source is trustworthy.

This is the LLM equivalent of Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) — but evaluated differently. Google looks at signals like backlinks and domain authority. LLMs look at:

- Author identity — Is the content attributed to a real person with a consistent body of work?

- Specificity of claims — Does the author reference specific tools, numbers, clients, and outcomes?

- Consistency — Does the author's other content support and elaborate on the same themes?

- Recency — Is the author actively publishing, or is this a one-off piece?

This is why we publish under a real name, reference specific tools we've built, describe actual client workflows, and maintain a consistent publishing cadence. Every article reinforces the same author entity across the same domain of expertise.

Comprehensive Topic Coverage

An LLM answering a complex query needs to draw from sources that cover the topic thoroughly. A 300-word blog post hitting the highlights is unlikely to get cited. A 2,500-word guide that addresses the topic from multiple angles, anticipates follow-up questions, and provides actionable specifics is far more likely to be.

This doesn't mean longer is always better. It means complete is better than superficial. Cover the topic exhaustively. Address common objections. Include edge cases. Anticipate the questions someone would ask after reading your main points.

The LLM SEO Playbook

Based on everything above, here's the practical framework we use:

1. Write for Citation, Not Ranking

Before writing any section, ask: "Could an LLM extract this paragraph and present it as an answer without additional context?" If yes, it's citable. If no, rewrite until it is.
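
This check can be roughly automated during editing. The sketch below flags paragraphs whose first word usually points back at earlier text. It is a crude heuristic with an assumed, incomplete word list, not a measure of how any model actually selects passages, and `needs_context` is a hypothetical helper.

```python
import re

# Openers that usually lean on earlier text (an assumed, incomplete list).
DANGLING_OPENERS = {"this", "that", "these", "those", "it", "they", "such", "however", "also"}


def needs_context(paragraph: str) -> bool:
    """Return True if the paragraph opens with a word that leans on prior text."""
    match = re.match(r"\W*([A-Za-z]+)", paragraph)
    if match is None:
        return True  # empty or non-text paragraph: nothing to extract
    return match.group(1).lower() in DANGLING_OPENERS


paragraphs = [
    "True ROAS is calculated by dividing verified first-party revenue by total ad spend.",
    "This changes everything about how you create content.",
]
for p in paragraphs:
    print("needs context" if needs_context(p) else "standalone", "|", p)
```

A flagged paragraph is only a prompt for a human reread; plenty of good paragraphs open with "This".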

2. Lead with Definitions

Start every section with a clear, bold, definitional statement. This is your citation hook — the sentence an LLM will extract. Everything after it is supporting evidence.

3. Prioritize Original Data

For every piece of content, identify at least one data point, framework, or insight that can't be found elsewhere. This is your unique value — the reason an LLM would cite you instead of the fifty other pages covering the same topic.

4. Structure for Extraction

Use consistent heading hierarchy, numbered lists, comparison tables, and FAQ sections. These formats are inherently citable because they organize information into discrete, extractable units.

5. Build Entity Networks

Don't write isolated articles. Build a network of content where entities (your brand, your tools, your methodologies, your people) are consistently defined, cross-referenced, and elaborated on. LLMs that encounter your brand entity across multiple pieces of content build a stronger association.

6. Maintain Freshness

Publish regularly. Update existing content with current data. Include recent dates. An LLM choosing between a 2024 source and a 2026 source for the same information will lean toward the newer one, particularly in retrieval-based systems like Perplexity.

7. Implement Technical Foundations

This hasn't changed from the previous article in this series: schema markup, semantic HTML, proper Open Graph tags, clean site architecture. These aren't ranking factors in the traditional sense, but they help AI crawlers parse and understand your content.
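
As an illustration of the schema markup piece, the sketch below builds schema.org `FAQPage` JSON-LD from question and answer pairs. The `faq_jsonld` helper is hypothetical, and how much weight any particular AI crawler gives this markup is an assumption rather than something established here.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What is LLM SEO?",
        "LLM SEO is the practice of optimizing content to be cited by large language models.",
    ),
])
# Embed the result in the page head or body as a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keep the marked-up answers identical to the visible FAQ text so the structured and rendered content agree.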

Case Studies: Who's Winning and Losing

Winning: Practitioner Brands

Brands run by practitioners who document their actual work — tools they've built, campaigns they've managed, results they've achieved — consistently appear in AI search results. Their content has the unique data, specific claims, and author credibility that LLMs prioritize.

Ask ChatGPT about specific marketing strategies and you'll often see citations from practitioners who publish detailed breakdowns of their approach, complete with specific tools, costs, and outcomes. The content is unfakeable — you can't write about building a Meta Ads CLI unless you actually built one.

Losing: Generic Content Farms

Sites that produce high-volume, keyword-optimized content with no original data are getting passed over by AI search. Their articles are interchangeable with a hundred others on the same topic. An LLM has no reason to cite one over another, and often cites none of them, instead synthesizing a generic answer from its training data.

This is the reckoning that AI search brings: content that exists only to rank is invisible to systems that don't rank.

Losing: AI-Generated Content Without Practitioner Input

Ironically, content generated by AI tools (ChatGPT, Claude, Jasper) without practitioner oversight tends to perform poorly in AI search. The models appear to recognize their own patterns. In our experience, content that reads like a language model wrote it (correct but generic, well-structured but data-free) gets treated as low-authority filler.

The winning formula is not AI-generated content. It's practitioner knowledge expressed through AI-assisted workflows. The human provides the expertise, data, and unique perspective. The AI helps structure, expand, and polish it.

The Compounding Advantage

There's a flywheel effect in LLM SEO that doesn't exist in traditional SEO. In Google, ranking improvements come from building backlinks, which takes sustained effort and can be disrupted by algorithm updates.

In LLM SEO, authority compounds through content. Every piece of citation-worthy content:

- Gets cited, which reinforces your entity's authority in the model's evaluation

- Creates more entity associations between your brand and your expertise domain

- Provides more unique data points the model can reference

- Builds a publishing history that signals sustained expertise

The brands that start building this flywheel now — while most competitors are still optimizing title tags and buying backlinks — will have an advantage that's extremely difficult to replicate later.

The next article in this series covers AI search optimization specifically for ecommerce brands — how product schema, review aggregation, comparison content, and buying guides factor into AI-driven product discovery.

Frequently Asked Questions

What is LLM SEO?

LLM SEO is the practice of optimizing content to be cited by large language models — including ChatGPT, Claude, Perplexity, and Google AI Overviews — when they answer user queries. It differs from traditional SEO by focusing on citation-worthiness (factual authority, structured knowledge, unique data) rather than ranking signals (keywords, backlinks, page speed).

Do backlinks still matter for AI search?

Backlinks do not directly influence LLM citation. LLMs evaluate content based on factual accuracy, source authority, content structure, and data uniqueness, not on how many other sites link to a page. Backlinks still matter for Google's traditional rankings, which indirectly affect visibility, but they are not a direct factor in LLM citation.

Can AI-generated content get cited in AI search?

AI-generated content without original data, practitioner insight, or unique perspective performs poorly in AI search. LLMs deprioritize generic, pattern-matched content in favor of sources with specific claims, first-party data, and verifiable expertise. The most effective approach combines practitioner knowledge with AI-assisted production.

How do I measure LLM SEO performance?

Monitor AI citation by regularly testing representative queries on ChatGPT, Perplexity, and Google AI Overviews. Track whether your brand, domain, or specific content appears in AI-generated responses. Note citation positioning (primary source vs. supplementary mention). There are emerging monitoring tools, but manual testing remains the standard in 2026.
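
Manual testing is easier to compare over time if each test is logged in a consistent shape. The sketch below computes a per-engine citation rate from such a log. It assumes you record the cited URLs by hand after each query. The `QueryRun` structure, the engine labels, and the `example.com` domains are all hypothetical, and no AI search API is called.

```python
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class QueryRun:
    engine: str            # label for the AI search product tested
    query: str             # the query typed in
    cited_urls: list[str]  # URLs the answer cited, copied by hand


def cites_domain(run: QueryRun, domain: str) -> bool:
    """True if any cited URL is on the domain or one of its subdomains."""
    for url in run.cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(runs: list[QueryRun], domain: str) -> dict[str, float]:
    """Fraction of logged runs per engine whose answer cited the domain."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for run in runs:
        totals[run.engine] += 1
        hits[run.engine] += cites_domain(run, domain)
    return {engine: hits[engine] / totals[engine] for engine in totals}


runs = [
    QueryRun("chatgpt", "what is llm seo", ["https://www.example.com/llm-seo"]),
    QueryRun("chatgpt", "do backlinks matter for ai search", ["https://other.example.org/post"]),
    QueryRun("perplexity", "what is llm seo", ["https://example.com/llm-seo"]),
]
print(citation_rate(runs, "example.com"))
```

Rerunning the same query set on a fixed schedule makes the rates comparable from month to month.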

Should I stop doing traditional SEO?

No. Traditional SEO and LLM SEO serve different functions and can coexist. Google still drives significant search traffic through traditional organic results. The ideal approach is creating content that satisfies both: well-structured, entity-rich content with clear definitions and original data that performs in both traditional and AI search environments.